Estimating Hydrofoil Ride Height with Computer Vision

Ride height is a critical control variable for high-performance hydrofoiling craft. To adjust control surfaces and foil angles effectively, the control system relies on continuous, real-time height feedback. If that measurement is noisy or delayed, the boat can experience increased drag or unstable flight conditions.

Current systems typically rely on ultrasonic ranging or LiDAR to measure this distance. However, out on the water, both methods have serious practical drawbacks. Ultrasonic sensors are highly sensitive to spray, aerated water, and wind. In highly turbulent flows, they often capture only average surface characteristics rather than the actual dynamic surface. LiDAR is faster and has higher resolution, but its performance degrades due to reflections from bubbles and droplets.

To address these limitations, my team and I proposed a vision-based ride-height estimation method. The idea is simple: mount a high-speed camera to capture the hydrofoil mast, and use a computer vision algorithm to segment the mast and detect the air-water interface.

Technical Stack

- Languages: Python 3.12

- Data Generation: Blender 3D, BlenderProc

- Machine Learning: TensorFlow/Keras, PyTorch

- Source Code: GitHub Repository

The Challenge: Creating the Data

You can’t train a model without data, and for this specific problem, none existed.

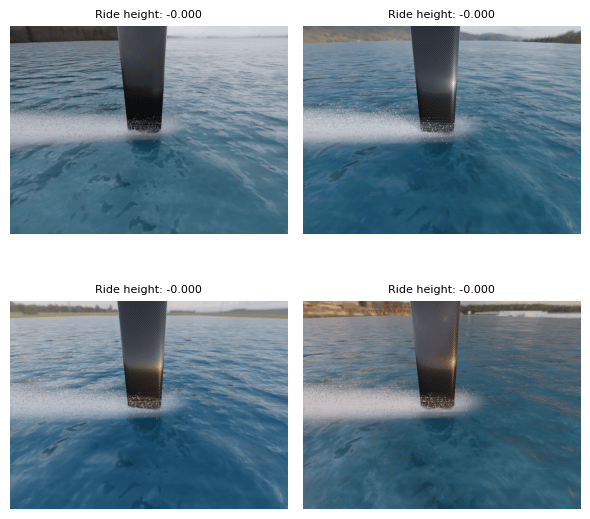

To get around the “no data” problem, we built a procedural generation pipeline using BlenderProc. This allowed us to synthetically generate thousands of photorealistic images across a range of environments and lighting conditions.

We placed a simulated camera pointing down toward the water at an approximately 56-degree angle, mimicking a GoPro Hero 5 mounted to the hull. To make the water interaction look realistic without melting our hardware, we used a geometry node-based particle system connected to a dynamic paint system to simulate the wake and water spray.

Generating the dataset took roughly 8.5 seconds per image on an NVIDIA RTX 5070 Ti, resulting in 3200 images in about 7.5 hours.
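As a quick sanity check on those figures, the render budget can be worked out directly. This is a minimal sketch that assumes images are rendered one after another on a single GPU; the function name `render_hours` is just illustrative.

```python
# Back-of-the-envelope render budget using the figures quoted above.
SECONDS_PER_IMAGE = 8.5  # approximate per-image render time
NUM_IMAGES = 3200        # size of the synthetic dataset


def render_hours(num_images: int, seconds_per_image: float) -> float:
    """Total wall-clock render time in hours, assuming sequential rendering."""
    return num_images * seconds_per_image / 3600.0


total_hours = render_hours(NUM_IMAGES, SECONDS_PER_IMAGE)
print(f"{total_hours:.2f} h for {NUM_IMAGES} images")
```

The same function makes it easy to estimate what a larger dataset would cost before committing GPU time to it.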

The Data Preprocessing Trick

The initial 3200 .hdf5 files totaled about 3.2 GB. Opening and decoding thousands of separate files adds significant I/O and CPU overhead during training.

To fix this, we compressed the images with WebP, which provided a large compression ratio while keeping acceptable visual quality, and consolidated everything into a single HDF5 file. This dropped the total size from 3.23 GB to just 0.12 GB, a 96.21% space savings.
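One way to do this kind of consolidation is sketched below. It is not taken from the project's code: the dataset names (`images_webp`, `ride_height`), the quality setting, and the helper functions are all assumptions, and random frames stand in for the rendered images. The idea is to store each frame as a variable-length byte array of WebP data inside one HDF5 file, next to an array of labels.

```python
import io
import os
import tempfile

import h5py
import numpy as np
from PIL import Image


def encode_webp(rgb: np.ndarray, quality: int = 80) -> np.ndarray:
    """Compress an HxWx3 uint8 image to WebP and return the bytes as a uint8 array."""
    buf = io.BytesIO()
    Image.fromarray(rgb).save(buf, format="WEBP", quality=quality)
    return np.frombuffer(buf.getvalue(), dtype=np.uint8)


def decode_webp(blob: np.ndarray) -> np.ndarray:
    """Decode WebP bytes (as a uint8 array) back to an HxWx3 uint8 image."""
    return np.asarray(Image.open(io.BytesIO(blob.tobytes())).convert("RGB"))


def consolidate(images, heights, out_path, quality=80):
    """Write all frames and labels into a single HDF5 file."""
    with h5py.File(out_path, "w") as f:
        # Variable-length uint8 rows: one compressed frame per row.
        blobs = f.create_dataset(
            "images_webp", shape=(len(images),), dtype=h5py.vlen_dtype(np.uint8)
        )
        for i, img in enumerate(images):
            blobs[i] = encode_webp(img, quality)
        f.create_dataset("ride_height", data=np.asarray(heights, dtype=np.float32))


# Tiny demo on random frames (placeholders for rendered images).
rng = np.random.default_rng(0)
demo_images = [rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8) for _ in range(4)]
demo_heights = [0.1, 0.4, 0.7, 0.9]
demo_path = os.path.join(tempfile.mkdtemp(), "dataset.h5")
consolidate(demo_images, demo_heights, demo_path)

with h5py.File(demo_path, "r") as f:
    first = decode_webp(f["images_webp"][0])
    labels = f["ride_height"][:]
print(first.shape, labels)
```

Because WebP is lossy at this setting, the decoded frame is close to, but not identical to, the original. Random noise also compresses far worse than rendered scenes, so the demo says nothing about the achievable ratio.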

Building the Models

For the machine learning side of things, we treated this strictly as a regression problem, mapping an RGB image directly to a normalized scalar ride height between 0 and 1.
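The post does not say how the ride height was normalized, so the following is only a sketch assuming plain min-max scaling. The bounds `min_m` and `max_m` (here 0.0 m and 1.2 m) are hypothetical placeholders, not values from the project.

```python
def normalize_height(height_m: float, min_m: float, max_m: float) -> float:
    """Map a physical ride height in metres to the [0, 1] regression target."""
    if max_m <= min_m:
        raise ValueError("max_m must be greater than min_m")
    return (height_m - min_m) / (max_m - min_m)


def denormalize_height(h_norm: float, min_m: float, max_m: float) -> float:
    """Map a model output in [0, 1] back to metres."""
    return min_m + h_norm * (max_m - min_m)


# Hypothetical bounds, for illustration only.
MIN_M, MAX_M = 0.0, 1.2
target = normalize_height(0.6, MIN_M, MAX_M)
recovered = denormalize_height(target, MIN_M, MAX_M)
print(target, recovered)
```

Keeping the inverse mapping next to the forward one makes it straightforward to report errors in physical units later.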

ResNet-18 Regression Baseline

For our baseline, we adopted the well-established ResNet-18 architecture. ResNet uses deep residual learning, meaning each block learns a correction to its input rather than a whole new transformation, following the logic $y = F(x) + x$, where $x$ is the block input and $F(x)$ is the learned residual.

Because we only cared about a single continuous ride-height output, we stripped out the final classification head and replaced it with a single-output regression layer. We minimized the mean squared error (MSE) with the Adam optimizer over 20 epochs and tracked the mean absolute error (MAE) as the evaluation metric.

The Custom CNN

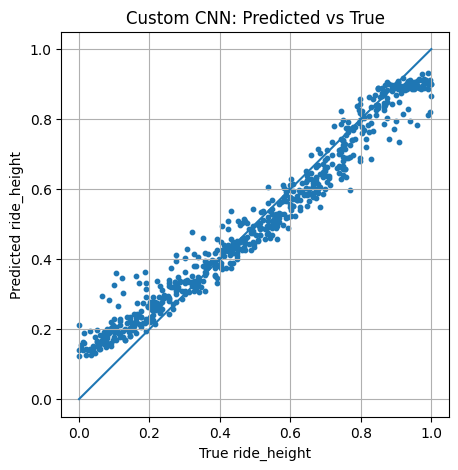

In parallel, we built a custom convolutional neural network in TensorFlow/Keras. The goal here was to see if a much lighter, task-specific architecture could match the performance of the bulky ResNet backbone.

Initially, a simple CNN resulted in unacceptably high MAE. To fix this, we updated the model to use residual layers. This significantly improved performance, though it did increase the complexity of the custom model.
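To illustrate what adding residual layers to a small Keras model can look like, here is a minimal sketch. The layer counts, filter sizes, input shape, and the sigmoid output are assumptions chosen for illustration, not the architecture the project actually used.

```python
from tensorflow import keras

layers = keras.layers


def residual_block(x, filters: int, stride: int = 1):
    """Two 3x3 convolutions plus a skip connection: output = F(x) + shortcut(x)."""
    shortcut = x
    y = layers.Conv2D(filters, 3, strides=stride, padding="same", use_bias=False)(x)
    y = layers.BatchNormalization()(y)
    y = layers.ReLU()(y)
    y = layers.Conv2D(filters, 3, padding="same", use_bias=False)(y)
    y = layers.BatchNormalization()(y)
    # Project the shortcut with a 1x1 convolution when the shapes differ.
    if stride != 1 or x.shape[-1] != filters:
        shortcut = layers.Conv2D(filters, 1, strides=stride, use_bias=False)(x)
        shortcut = layers.BatchNormalization()(shortcut)
    return layers.ReLU()(layers.Add()([y, shortcut]))


def build_small_residual_regressor(input_shape=(128, 128, 3)) -> keras.Model:
    """A compact residual CNN that maps an RGB image to one value in [0, 1]."""
    inputs = keras.Input(shape=input_shape)
    x = layers.Conv2D(16, 3, strides=2, padding="same", activation="relu")(inputs)
    x = residual_block(x, 16)
    x = residual_block(x, 32, stride=2)
    x = residual_block(x, 64, stride=2)
    x = layers.GlobalAveragePooling2D()(x)
    # Sigmoid keeps predictions inside the normalized range (an assumption).
    outputs = layers.Dense(1, activation="sigmoid")(x)
    model = keras.Model(inputs, outputs)
    model.compile(optimizer="adam", loss="mse", metrics=["mae"])
    return model


custom_model = build_small_residual_regressor()
print(custom_model.count_params())
```

The skip connections add few parameters on their own; most of the extra complexity comes from the projection shortcuts and the additional convolutions per block.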

Testing and Results

So, did it work? Yes, but with some caveats regarding environmental changes.

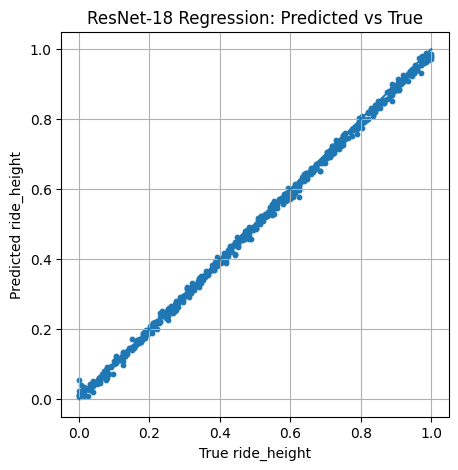

When we evaluated the ResNet-18 baseline on data that shared the same background/environment as the training set, the predicted vs. actual correlation was incredibly tight. The model learned a strong mapping from the RGB images to the ride height.

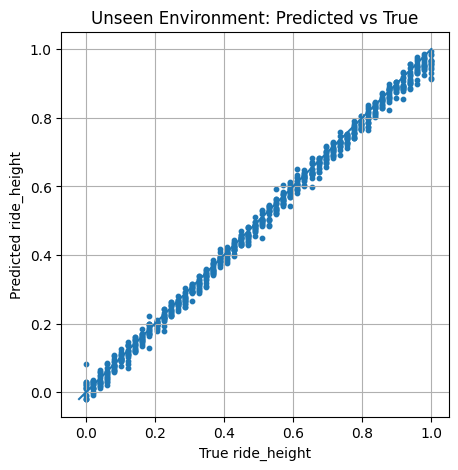

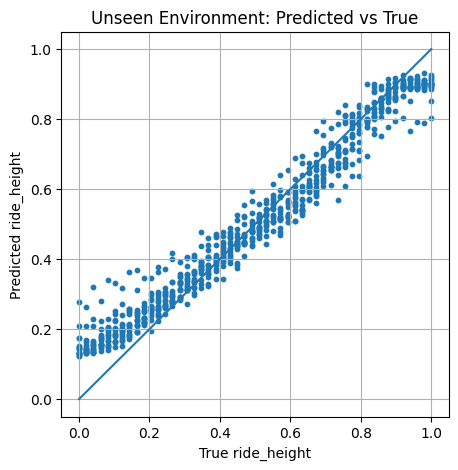

However, the real test was introducing novel background data (unseen HDRIs and different lighting). When we threw new environments at both models, the spread in the predictions widened. The models were sensitive to scene appearance (lighting, context) and not just to the mast-water interface, although the MAE rose only slightly (see the table below).

Despite the wider spread, both models maintained a strong positive trend, which suggests they were extracting the right physical cues. Below are the correlation charts for both models across seen and unseen environments.

Performance Summary (MAE):

| Model | Data Split | MAE (normalized ride height) |
|---|---|---|
| ResNet-18 | Seen | 0.0153 |
| ResNet-18 | Unseen | 0.0155 |
| Custom CNN | Seen | 0.0538 |
| Custom CNN | Unseen | 0.0550 |
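Since these MAE values are in normalized units, it can help to see how the metric is computed and what it would mean physically. The sketch below uses a hypothetical 1.0 m ride-height range (`HEIGHT_RANGE_M`) purely for illustration; the real range is not given in this post.

```python
def mean_absolute_error(y_true, y_pred) -> float:
    """Average absolute difference between targets and predictions."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mae_to_mm(mae_norm: float, height_range_m: float) -> float:
    """Convert a normalized MAE to millimetres for a given height range."""
    return mae_norm * height_range_m * 1000.0


# Toy example of the metric itself.
toy_mae = mean_absolute_error([0.2, 0.5, 0.9], [0.25, 0.45, 0.8])

# Hypothetical range used only to put the table in physical terms.
HEIGHT_RANGE_M = 1.0
reported = {
    ("ResNet-18", "Seen"): 0.0153,
    ("ResNet-18", "Unseen"): 0.0155,
    ("Custom CNN", "Seen"): 0.0538,
    ("Custom CNN", "Unseen"): 0.0550,
}
for (name, split), mae in reported.items():
    print(f"{name:10s} {split:6s} {mae_to_mm(mae, HEIGHT_RANGE_M):.1f} mm")
```

Under that assumed range, each 0.01 of normalized MAE corresponds to 10 mm of ride-height error; a different range scales the result linearly.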

Full Technical Paper

For a deep dive into the methodology, synthetic data generation, and mathematical foundations of this vision-based approach, you can read our full technical paper below.


Conclusion and Limitations

Overall, the project demonstrated that machine-vision regression is a viable, low-cost pathway for estimating hydrofoil ride height. The ResNet-18 architecture provided a strong baseline, while the custom CNN followed the same trend with lower complexity, at roughly 3.5 times the MAE.

That being said, this remains a proof of concept. The biggest limitation is that the models were trained entirely on synthetic Blender data. While we varied the lighting and wake, the synthetic data doesn’t fully capture the chaotic reality of unpredictable weather, heavy real-world spray, and camera vibrations.

Future work will require real-data evaluation and hardware integration testing to close the synthetic-to-real gap. But for now, getting a neural network to estimate ride height from a simulated GoPro is a big step forward.
